Industrial AI infrastructure is rapidly becoming the central challenge of the artificial intelligence economy. For several years, innovation focused mainly on algorithms and model capabilities. Today, however, the critical question is no longer only technological. It is industrial.

How can organisations operate artificial intelligence reliably at massive scale?

As AI adoption accelerates across industries, companies must deploy infrastructure capable of supporting unprecedented computational demand. Training advanced models now requires enormous datasets, thousands of GPUs and high-performance data architectures.

Therefore, the next wave of AI innovation will depend less on model design and more on the infrastructure that powers it.

As a result, a new technological paradigm is emerging. Artificial intelligence is evolving from an experimental technology into a full industrial stack that combines computing power, high-speed data pipelines and advanced orchestration systems.

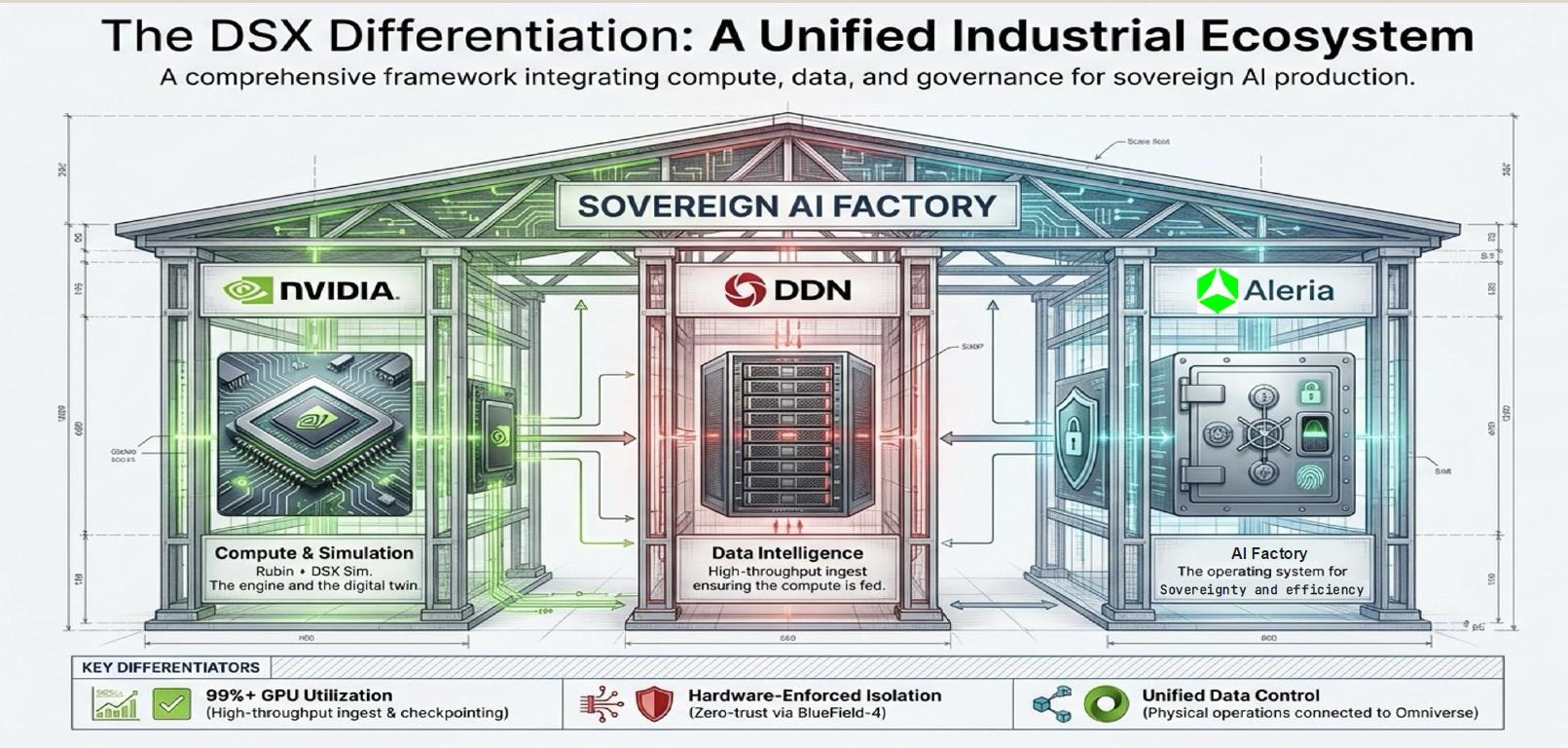

Within this transformation, a strategic trio is gaining attention: NVIDIA for computing power, DDN for data infrastructure and Aleria for AI factory orchestration.

Together, they illustrate how industrial AI infrastructure is beginning to take shape.

The industrial era of artificial intelligence

Artificial intelligence has long been associated with research labs and academic breakthroughs. However, the technology is now entering a new phase: industrialisation.

Modern AI models require vast training datasets and extremely powerful computing clusters. As a result, companies must build infrastructure comparable to large-scale digital factories.

Moreover, AI workloads generate continuous streams of data during both training and inference. These data flows require ultra-fast storage systems and high-bandwidth pipelines.

Without such capabilities, even the most advanced models remain underutilised.

Performance in artificial intelligence therefore increasingly depends on three structural pillars. Organisations need substantial computing power. They need fast, continuous access to data. Finally, they require an architecture capable of orchestrating both elements seamlessly.

These requirements are precisely what define industrial AI infrastructure.

NVIDIA: the engine powering AI computation

Over the past decade, NVIDIA has become the leading supplier of AI computing hardware.

Its GPUs now power a large share of the world's large-scale artificial intelligence systems. Research laboratories, hyperscale cloud providers and technology giants all rely on NVIDIA hardware.

Consequently, the company has positioned itself at the centre of the global AI ecosystem.

NVIDIA’s processors deliver the parallel computing capacity required for deep learning workloads. Large clusters of GPUs train models, process data and perform real-time inference.

However, these computing engines cannot operate efficiently without an equally powerful data infrastructure.

In fact, GPUs reach peak performance only when data flows continuously and at high speed.

This challenge opens the door for specialised infrastructure providers.

DDN: the data infrastructure behind AI performance

In large-scale artificial intelligence systems, data throughput is just as critical as computing power.

If data cannot move quickly enough, GPU clusters remain idle. As a result, infrastructure efficiency declines dramatically.
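
As a rough illustration of this effect, the short Python sketch below estimates how much of the time a cluster can keep computing when storage delivers less data than the GPUs are able to consume. The function name and every figure in it (GPU count, per-GPU ingest rate, storage throughput) are hypothetical values chosen for the arithmetic, not measurements of any real system, and the model assumes data supply is the only bottleneck.

```python
def gpu_utilisation(num_gpus: int, gb_per_s_per_gpu: float, storage_gb_per_s: float) -> float:
    """Estimate the fraction of time GPUs are busy.

    Simplifying assumption: data supply is the only bottleneck, so
    utilisation is the ratio of delivered to required throughput,
    capped at 100%.
    """
    if num_gpus <= 0 or gb_per_s_per_gpu <= 0:
        raise ValueError("num_gpus and gb_per_s_per_gpu must be positive")
    if storage_gb_per_s < 0:
        raise ValueError("storage_gb_per_s must not be negative")
    demand_gb_per_s = num_gpus * gb_per_s_per_gpu
    return min(1.0, storage_gb_per_s / demand_gb_per_s)


if __name__ == "__main__":
    # Hypothetical cluster: 1,000 GPUs, each able to consume 2 GB/s of training data.
    for storage in (500.0, 1000.0, 2000.0):
        utilisation = gpu_utilisation(1000, 2.0, storage)
        print(f"storage {storage:.0f} GB/s -> utilisation {utilisation:.0%}")
```

Under these assumptions, a storage layer delivering 500 GB/s to a cluster that needs 2,000 GB/s would leave the GPUs idle roughly three quarters of the time.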

This issue explains the growing importance of companies such as DDN.

DDN (DataDirect Networks) has built a strong reputation in high-performance storage systems designed for data-intensive environments. Its platforms support large computing clusters used in scientific research, supercomputing and artificial intelligence.

Moreover, DDN solutions are designed to provide ultra-fast access to massive datasets. This capability helps AI models train and operate without data bottlenecks.

Therefore, data infrastructure has become a strategic layer within the modern AI stack.

In large AI environments, storage architecture now plays a role as critical as computing itself.

Aleria and the rise of AI factories

Between computing hardware and data infrastructure lies a third layer that often receives less attention: orchestration.

This layer determines how different technological components interact and operate at scale.

Aleria focuses precisely on this architectural dimension.

The company designs infrastructure that integrates GPU clusters, storage systems and software environments into coherent AI production platforms. These platforms function as AI factories, capable of running artificial intelligence workloads continuously.

In practical terms, Aleria transforms separate technologies into operational AI ecosystems.

If NVIDIA provides the engines and DDN supplies the data pipelines, Aleria builds the factory that coordinates the entire system.

Such orchestration is essential. Without it, infrastructure complexity can quickly become unmanageable.
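
To make the idea of orchestration more concrete, here is a deliberately simplified Python sketch of a scheduler that starts a workload only when both GPU capacity and storage bandwidth are available. It is a toy model built on assumptions: the `Job` and `Factory` classes, the `schedule` function and all the numbers are hypothetical and do not represent Aleria's software or any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    gpus: int              # GPUs the job needs
    read_gb_per_s: float   # storage bandwidth the job needs


@dataclass
class Factory:
    free_gpus: int
    free_read_gb_per_s: float
    running: list = field(default_factory=list)

    def try_start(self, job: Job) -> bool:
        """Start the job only if compute AND data capacity are both available."""
        if job.gpus <= self.free_gpus and job.read_gb_per_s <= self.free_read_gb_per_s:
            self.free_gpus -= job.gpus
            self.free_read_gb_per_s -= job.read_gb_per_s
            self.running.append(job.name)
            return True
        return False


def schedule(factory: Factory, jobs: list) -> list:
    """Try each job in order and return the names of jobs left waiting."""
    waiting = []
    for job in jobs:
        if not factory.try_start(job):
            waiting.append(job.name)
    return waiting


if __name__ == "__main__":
    # Hypothetical capacity: 8 GPUs and 10 GB/s of storage read bandwidth.
    factory = Factory(free_gpus=8, free_read_gb_per_s=10.0)
    jobs = [
        Job("train-a", gpus=4, read_gb_per_s=6.0),
        Job("train-b", gpus=4, read_gb_per_s=6.0),
        Job("infer-c", gpus=2, read_gb_per_s=1.0),
    ]
    print("waiting:", schedule(factory, jobs))
    print("running:", factory.running)
```

In this example the second training job has to wait even though four GPUs are still free, because the remaining storage bandwidth cannot feed it. Coordinating compute and data together, rather than separately, is the kind of decision an orchestration layer has to make.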

Infrastructure becomes the strategic battlefield of AI

Artificial intelligence is entering a new stage of global technological competition.

Until recently, the race focused primarily on model development. Companies competed to build larger and more capable models.

Today, however, infrastructure is becoming the decisive factor.

Countries, technology firms and emerging AI hubs are investing billions in computing clusters, data centres and specialised infrastructure.

Their objective is clear: secure the capacity required to run artificial intelligence at industrial scale.

Consequently, control over industrial AI infrastructure is increasingly viewed as a strategic advantage.

The future of artificial intelligence will not depend solely on model innovation. It will depend just as much on the infrastructure capable of powering those models across entire economies.